We are delighted to announce the release of Spaceport 1.2, which brings a range of improvements for capturing volumetric video. If you haven’t heard about Spaceport Volumetric Video before, you can read the previous entries:

– Part 1: Overview of the Spaceport Volumetric Video Capturing and Streaming

– Part 2: Spaceport Volumetric Video Container Structure

– Part 3: Spaceport Volumetric Video Live Streaming

– Part 4: Spaceport 1.0

Version 1.2 includes many bug fixes and new features such as audio support, along with other improvements. You can view the full changelog on the official release page on the GitHub wiki.

New Volumetric Video Features

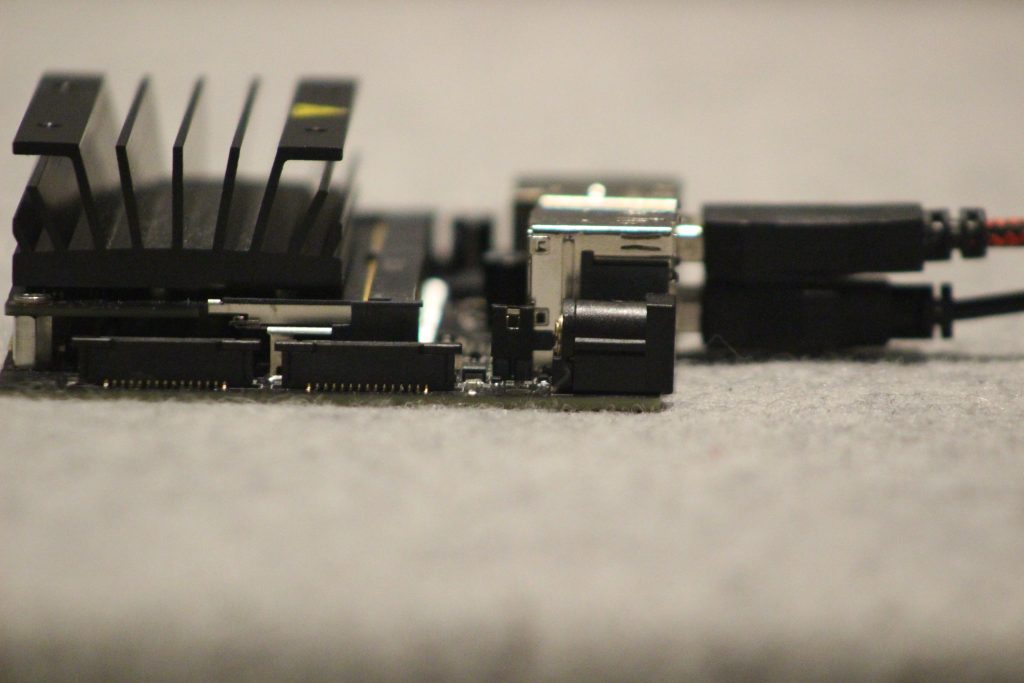

Compatible With Jetson Nano DK

Jetson Nano is a small, powerful computer in the NVIDIA Jetson family for embedded applications and AI. Spaceport 1.2 introduces Jetson Nano support for capturing volumetric video. The new publishers let you easily integrate Jetsons into your volumetric capture setup. This is a great chance to remove computers from the pipeline, which cuts down on cable clutter and on the space the setup needs.

How To Publish With Jetson Nano:

The following installation steps were tested on a Jetson Nano, but should also work on any Jetson platform (TX2, Xavier) running Ubuntu.
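Before trying the steps on a different board, it can help to confirm which Jetson model you are on. The sketch below is not part of Spaceport: it assumes the board exposes its model name as a NUL-terminated string in /proc/device-tree/model, as Jetson Linux images commonly do, and the function name `jetson_model` is hypothetical.

```shell
# Print the board model string, e.g. "NVIDIA Jetson Nano Developer Kit".
# Assumption: the model is exposed in /proc/device-tree/model;
# pass another path as $1 to override (useful for testing).
jetson_model() {
    model_file="${1:-/proc/device-tree/model}"
    # Fail quietly on machines that do not expose a device-tree model.
    [ -r "$model_file" ] || return 1
    # Strip the trailing NUL byte so the result is plain text.
    tr -d '\0' < "$model_file"
    echo
}
```

Calling `jetson_model` together with `lsb_release -sc` gives a quick record of the board and Ubuntu release the steps are being run on.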

Step 1: Set up the Jetson Nano and configure the package sources.

sudo sh -c 'echo "deb http://packages.ros.org/ros/ubuntu $(lsb_release -sc) main" > /etc/apt/sources.list.d/ros-latest.list'

Step 2: Add the ROS package key

sudo apt-key adv --keyserver 'hkp://keyserver.ubuntu.com:80' --recv-key C1CF6E31E6BADE8868B172B4F42ED6FBAB17C654

Step 3: Update the packages

sudo apt update

Step 4: Install ROS from packages

sudo apt install ros-melodic-desktop-full

Step 5: Update your .bashrc script:

echo "source /opt/ros/melodic/setup.bash" >> ~/.bashrc
source ~/.bashrc

Step 6: Get the Spaceport Jetson Nano Publisher

Step 7: Run the following commands to test the publishers

cd binaries
bash launch.sh
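The installation steps above hard-code ROS Melodic, which targets Ubuntu 18.04 (bionic), and the .bashrc step appends a new line every time it is run. The following is a minimal sketch of two helpers that could make the setup more robust, assuming you want to pick the ROS 1 distribution from the Ubuntu codename and keep the .bashrc edit idempotent. Both function names are hypothetical and not part of Spaceport.

```shell
# Map an Ubuntu codename (as printed by `lsb_release -sc`) to the ROS 1
# distribution released for it. Returns non-zero for unknown codenames.
ros_distro_for() {
    case "$1" in
        xenial) echo kinetic ;;
        bionic) echo melodic ;;
        focal)  echo noetic ;;
        *)      return 1 ;;
    esac
}

# Append a line to a file only if that exact line is not already there,
# so re-running the setup does not duplicate entries in ~/.bashrc.
append_once() {
    line="$1"
    file="$2"
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Example (not executed here):
#   distro=$(ros_distro_for "$(lsb_release -sc)") &&
#       append_once "source /opt/ros/$distro/setup.bash" ~/.bashrc
```

Whether the Spaceport publisher binaries work with a ROS distribution other than Melodic is not stated here, so treat the mapping as a convenience for checking your system rather than as a supported configuration.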

Texture Improvements to Compensate for Different Light Conditions

This has been one of the most awaited features since we first launched Spaceport 1.0. In our default setup, we use six Azure Kinect sensors, which capture images from six different angles. In such a system, each camera naturally records under different light conditions.

It is often difficult to create a homogeneous light field if you are not working in a studio. One of the major benefits that Spaceport 1.2 brings is its flexibility: when you capture the frames, you can now easily correct for varying illumination conditions.

Please let us know what you think.

Audio Support

As I’ve already mentioned, our efforts are currently focused on creating an end-to-end solution for capturing volumetric video, so we continue to improve the built-in features in Spaceport. We have added a new audio class that lets you record raw audio with any input device. Just add the following command to the run script and you are ready to record audio.

Conclusion

So, what can we expect next? We’ll soon release our goals for version 1.3. We would love for you to be part of building Spaceport’s roadmap. You can leave a comment, reach out on social media, or simply drop us a message at spaceport.tv to share your thoughts and ideas.

That’s all for today, stay tuned for more updates!