One of the most common solutions that a media server offers is video conferencing. Online classes, online meetings, and other use cases that require real-time multi-party media communication are all applications of conferencing. All of these use cases require interaction among users, so the media server should keep latency as low as possible. Ant Media Server (AMS) can provide less than 500ms latency with WebRTC. AMS has long provided all the streaming infrastructure that conference developers need. Now we have good news: Ant Media provides a ready-to-use conference solution for everyone, called Circle.

Companies want to have their own on-premise communication platform to keep data inside their internal network. AMS, as a media server, can be used on premise or in the cloud. Circle, a complete video conference solution built on top of AMS, can be installed on either an on-premise or a cloud AMS streaming system.

Besides latency, video conference solutions have other difficulties because of their N-N nature, where each of the N people communicates with the other N-1 people. Scalability and resource usage are the most important ones to solve for a good conference solution.

Scalability is one of the main factors always considered in AMS design. The scalable AMS implementation provided by the AMS cluster also works with video conference solutions, with one small difference. In a video conference cluster, you don’t have to distinguish between origin and edge nodes, because a participant connected to a cluster node publishes their stream to that node and plays the others from the same node. So a node is responsible for both publishing and playing while hosting video conference participants.

The other difficulty, resource (especially CPU) usage, directly affects the quality and cost of the solution.

AMS has supported SFU conference solutions and MCU output for conference rooms for a long time. Recently, we have made several optimizations in the new conference solution, and I will describe them in the next part.

Video Conference Server Side Optimizations

The following optimizations decrease the CPU usage on the server side. Before describing them, I want to give the load estimate for an SFU room.

For an N-participant video conference room, since each participant publishes their stream and plays the others, there are N streams and Nx(N-1) viewers on the server. Also, each viewer will play both video and audio tracks for each participant. So there will be Nx(N-1) video tracks and Nx(N-1) audio tracks.

For example, if there are 100 participants in a video conference room, then there will be 9900 video and 9900 audio playback tracks on the system. Please keep these numbers in mind; we will decrease them soon.
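The estimate above can be reproduced with a few lines of TypeScript. This is only an arithmetic sketch of the Nx(N-1) formula; the `RoomLoad` type and `baselineLoad` function are names made up for illustration and are not part of AMS.

```typescript
// Baseline SFU load for a room with no track limits.
// RoomLoad and baselineLoad are hypothetical names used only for this sketch.
interface RoomLoad {
  publishedStreams: number;
  videoTracks: number;
  audioTracks: number;
}

function baselineLoad(participants: number): RoomLoad {
  if (participants < 0 || Math.floor(participants) !== participants) {
    throw new RangeError("participants must be a non-negative integer");
  }
  // Every participant plays every other participant: N x (N-1) playbacks.
  const playbacks = participants === 0 ? 0 : participants * (participants - 1);
  return {
    publishedStreams: participants,
    videoTracks: playbacks,
    audioTracks: playbacks,
  };
}

// 100 participants: 100 published streams, 9900 video and 9900 audio tracks.
console.log(baselineLoad(100));
```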

Fewer Audio Tracks in a Video Conference and Performance Optimization

The main idea behind this optimization is quite natural. If more than 2 or 3 people talk at once in a room, the communication can’t be effective; in other words, nobody can follow the discussion. So there is no need to create as many audio tracks as there are participants. Instead, with this optimization, AMS creates a limited number of audio tracks for each participant to play the others’ audio, and then assigns these tracks to the talking participants according to their audio levels. 3 tracks seems to be a good number, but it is configurable with the maxAudioTrackCount setting.

With this setting, if you set the max audio track count to n then the total audio track count will be Nxn instead of Nx(N-1).

For our 100-participant room example, if we set the max audio track count to 3, then there will be 300 audio tracks instead of 9900. This significantly decreases both the connection count and CPU usage.
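To make the idea of sharing a few audio tracks among the loudest talkers concrete, here is a minimal sketch. It is not the AMS implementation, which this article does not detail; it only assumes that each participant has a numeric audio level where a higher value means louder. The `AudioSample` type and `pickActiveSpeakers` function are hypothetical names.

```typescript
// Hypothetical model: one audio level reading per participant.
interface AudioSample {
  participantId: string;
  level: number; // assumed scale: higher means louder
}

// Return the ids of the loudest participants, at most maxAudioTrackCount of them.
function pickActiveSpeakers(
  samples: AudioSample[],
  maxAudioTrackCount: number
): string[] {
  return samples
    .slice() // copy so the caller's array is not reordered
    .sort((a, b) => b.level - a.level) // loudest first
    .slice(0, Math.max(0, maxAudioTrackCount))
    .map((s) => s.participantId);
}

const speakers = pickActiveSpeakers(
  [
    { participantId: "alice", level: 0.1 },
    { participantId: "bob", level: 0.9 },
    { participantId: "carol", level: 0.5 },
    { participantId: "dave", level: 0.7 },
  ],
  3
);
console.log(speakers); // bob, dave, carol
```

Whatever the room size, each listener then receives at most `maxAudioTrackCount` audio tracks, which is where the Nxn total comes from.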

Bandwidth saver in Video Conference by Video Track Optimization

This optimization is similar to the audio track count limitation. You can limit the maximum number of video tracks with maxVideoTrackCount. When you limit the video track count, you see at most that many players on your screen, so this number also determines the layout or view on the screen. Unlike the audio track limit, you can change the max video track count on the fly with the setMaxVideoTrackCount method in the JS SDK. AMS shares the limited number of video tracks among the participants according to audio level and talking order. The participant who talked last will be presented in one of the limited number of players on the screen.

If you set the max video track count to m, then the total video track count for an N-participant room will be Nxm instead of Nx(N-1).

For the 100-participant room example, if we set the max video track count to 5, then there will be 500 video tracks instead of 9900.
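The reduced totals for both audio and video can be checked with a small helper. This is an arithmetic sketch only, and `limitedTrackCount` is a hypothetical name. One assumption goes beyond the formula in the text: for small rooms the per-participant count is capped at N-1, since a participant never needs more tracks than there are other participants.

```typescript
// Total playback tracks in a room when each participant receives
// at most maxTrackCount tracks. limitedTrackCount is a hypothetical helper.
function limitedTrackCount(participants: number, maxTrackCount: number): number {
  // Assumption: never more tracks per participant than other participants.
  const others = Math.max(0, participants - 1);
  const perParticipant = Math.max(0, Math.min(maxTrackCount, others));
  return participants * perParticipant;
}

console.log(limitedTrackCount(100, 3)); // audio with maxAudioTrackCount = 3: 300
console.log(limitedTrackCount(100, 5)); // video with maxVideoTrackCount = 5: 500
console.log(limitedTrackCount(4, 5));   // small room falls back to Nx(N-1): 12
```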

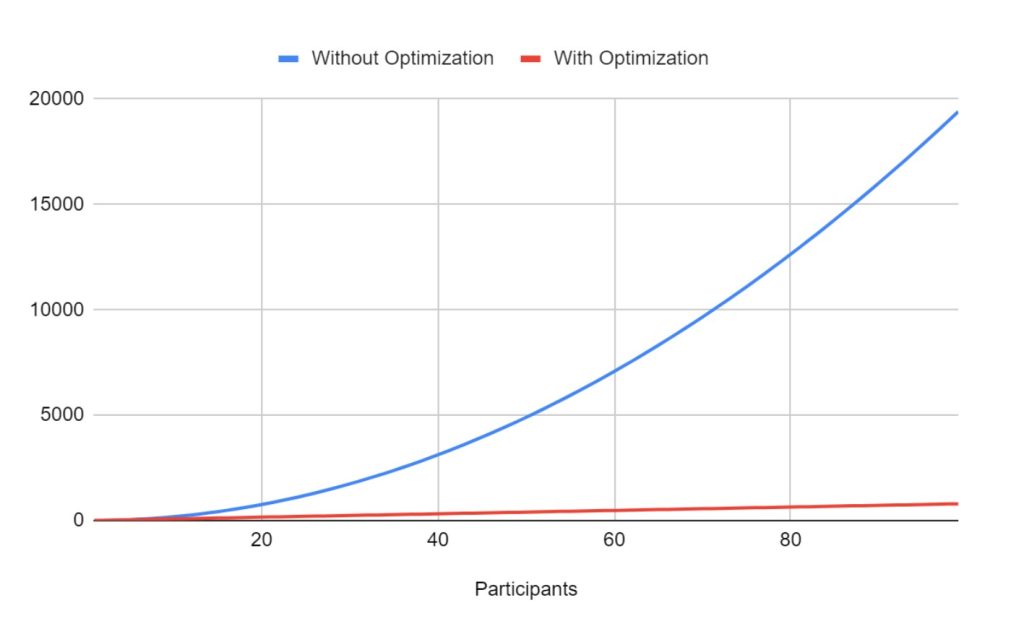

You can see the effect of the optimization as the number of participants increases.

Optimization Result in Video Conference

Client Side Improvements

Besides the optimizations on the server, there are some improvements on the client side that affect both client and server-side resource usage. For these improvements, the data channel should be enabled, because participants periodically share some information with each other through it: the pinned participant id, screen share status, and camera/mic status.

Dynamic Resolution and Bitrate

If nobody plays a participant in full screen (as pinned), then there is no need to send high-quality video from that participant. But if someone pins a participant, that participant should send a higher-quality stream. So, according to whether they are pinned on someone’s screen, a participant can change their resolution and bitrate. The JS SDK has applyConstraints and changeBandwidth methods to change video resolution and bitrate.
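The decision itself is simple enough to sketch as a pure function. The example below assumes that each client collects, from data channel messages, the stream id that every other participant currently has pinned (or null if none). The `VideoProfile` type, the two profiles with their resolution and bitrate values, and `chooseProfile` are all hypothetical. The sketch deliberately does not call applyConstraints or changeBandwidth, because their exact signatures are not shown in this article.

```typescript
// Hypothetical quality profiles; the numbers are illustrative, not AMS defaults.
interface VideoProfile {
  width: number;
  height: number;
  bitrateKbps: number;
}

const LOW_PROFILE: VideoProfile = { width: 320, height: 240, bitrateKbps: 300 };
const HIGH_PROFILE: VideoProfile = { width: 1280, height: 720, bitrateKbps: 1500 };

// pinnedStreamIds holds, for each other participant, the stream id they have
// pinned (or null), as reported over the data channel.
function chooseProfile(
  myStreamId: string,
  pinnedStreamIds: Array<string | null>
): VideoProfile {
  const someonePinnedMe = pinnedStreamIds.some((id) => id === myStreamId);
  return someonePinnedMe ? HIGH_PROFILE : LOW_PROFILE;
}

console.log(chooseProfile("stream-a", [null, "stream-b"]));       // low profile
console.log(chooseProfile("stream-a", ["stream-a", "stream-b"])); // high profile
```

The returned profile would then be mapped onto the SDK calls mentioned above, and only when it differs from the profile currently in use, to avoid renegotiating on every status message.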

Pinning Participants

The JS SDK provides the assignVideoTrack method to pin a participant and assign one of the video players on the screen to that participant. In this case, the corresponding video track for that player won’t be shared with the other participants anymore. With the same method, you can cancel the assignment. Also, as described in the previous section, the pinned participant’s video quality can be increased automatically.

As a best practice, when someone shares a screen, they notify the others through the data channel, and the others pin them automatically.

Pagination of Participants

As described earlier, the number of players on the screen is limited by the max video track count. So if the room has more participants than this number, you cannot see every participant in a single window. You can do 2 things: increase the max number of video tracks, as described before, which increases the number of players on the screen; or paginate the participants’ videos with the updateVideoTrackAssignments method in the JS SDK.
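Pagination comes down to choosing which slice of the participant list the limited players should show. The sketch below computes that slice as an offset and a size for a given page. `PageWindow` and `pageWindow` are hypothetical names, and how the result is passed to updateVideoTrackAssignments is left out because the article does not show that method's parameters.

```typescript
// Which slice of the other participants a page should display.
interface PageWindow {
  offset: number;    // index of the first participant on the page
  size: number;      // number of participants on the page
  pageCount: number; // total number of pages
}

function pageWindow(
  otherParticipants: number,
  maxVideoTrackCount: number,
  page: number // zero-based; out-of-range values are clamped
): PageWindow {
  if (maxVideoTrackCount < 1) {
    throw new RangeError("maxVideoTrackCount must be at least 1");
  }
  const pageCount = Math.max(1, Math.ceil(otherParticipants / maxVideoTrackCount));
  const clampedPage = Math.min(Math.max(0, page), pageCount - 1);
  const offset = clampedPage * maxVideoTrackCount;
  const size = Math.max(0, Math.min(maxVideoTrackCount, otherParticipants - offset));
  return { offset, size, pageCount };
}

// 12 other participants, 5 players on screen: pages of 5, 5, and 2.
console.log(pageWindow(12, 5, 0)); // offset 0, size 5, pageCount 3
console.log(pageWindow(12, 5, 2)); // offset 10, size 2, pageCount 3
```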

Data Channel Interface Between Server and UI

With these optimizations, the server decides how the audio and video tracks are assigned to the participants, so it should notify the UI side about these decisions. Since these decisions can change within milliseconds as participants talk, we use WebRTC data channel messaging between the server and the JS SDK instead of the available WebSocket, because the data channel provides low-latency data transfer.

Using the WebRTC data channel interface, the server sends the audio and video track assignments to the UI to show who is talking at that time.

New Video Conference UI: Circle

Besides these server and client-side optimizations, we are also announcing a new conference UI that is already adapted to them. This new UI not only provides a good user experience for end users but also a code base for developers. It is open source and fully adapted to the new optimizations in AMS. You can access the GitHub repository from here.

New Conference UI

Circle Installation

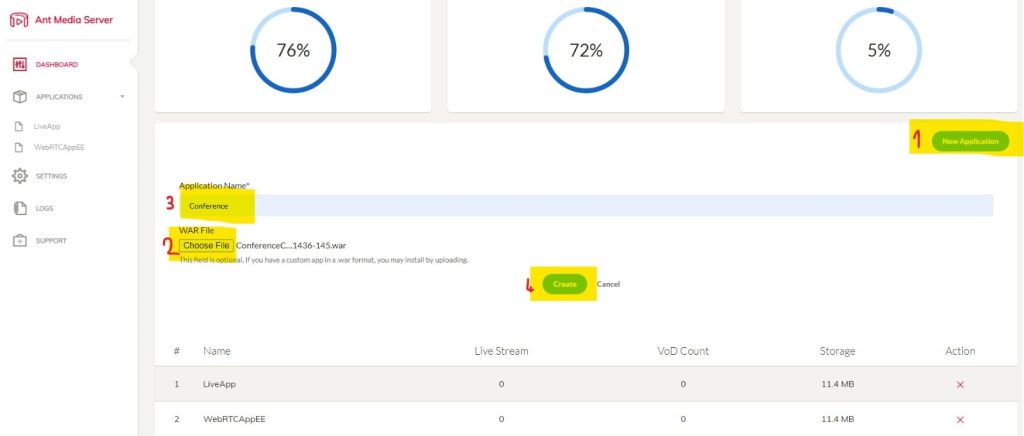

The new conference UI is developed as a streaming application on AMS and deployed as a single war file. You can install it as an application from the AMS Management Panel. First, download the latest war file. On the Dashboard page, click the New Application button, click the Choose File button, and browse to the downloaded war file. Give your application a name and click the Create button. That’s all.

Adding Conference App

Enjoy your new Conference Service.
