WebRTC servers play a central role in powering modern real-time communication applications, including voice and video calling, interactive broadcasting, screen sharing, and data exchange. While WebRTC (Web Real-Time Communication) enables direct peer-to-peer communication between browsers and apps, servers are essential for handling key functions such as signaling, NAT traversal, media relaying, recording, and scaling to multiparty scenarios.

In this comprehensive guide, we’ll explain what WebRTC servers are, why they are needed beyond basic browser-to-browser connections, the different server types involved in a WebRTC architecture, and how to choose and set up the right server for your real-time communication use case — whether that’s a video conference, live streaming platform, or interactive application.

If you’re new to WebRTC, you might want to learn what WebRTC is and how it works before reading any more of this post.


Introduction

WebRTC is the foundation of real-time communication in modern web applications. It powers voice and video chat, screen sharing, and data transfer — all directly between browsers or apps. But to build scalable and secure applications, you often need a WebRTC server.

In this guide, you’ll learn:

- What a WebRTC server is

- Different types of servers involved in WebRTC

- Multiparty topologies like SFU and MCU

- How to choose the best server for your needs

- How to install a free WebRTC server using Ant Media

Let’s start with the basics.

What is a WebRTC Server?

A WebRTC Server is a component that facilitates WebRTC sessions. While WebRTC enables peer-to-peer communication, servers are often required for:

- Managing connections (signaling)

- Traversing firewalls and NATs

- Relaying or processing media

- Enabling multiparty communication

Depending on your use case, a WebRTC deployment can include one or several of these server types.

Types of WebRTC Servers

WebRTC servers are generally classified into four categories:

1. WebRTC Application Servers

WebRTC application servers are traditional app servers that host your WebRTC-based application. Examples include Node.js, Java, or PHP-based servers where the business logic or UI is managed.

2. WebRTC Signaling Servers

Signaling servers are responsible for session negotiation. They help clients discover each other, exchange session descriptions, and agree on how to establish the connection. Protocols like WebSocket or SIP are typically used.
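
As a rough illustration of that relay role, the sketch below models a signaling server as an in-memory message router. The `SignalingRoom` class, the room and peer names, and the placeholder SDP strings are all hypothetical; a real deployment would carry the same messages over WebSocket or SIP.

```python
import json
from collections import defaultdict


class SignalingRoom:
    """In-memory stand-in for a signaling server: it only relays messages."""

    def __init__(self):
        # room name -> {peer id -> list acting as that peer's inbox}
        self.rooms = defaultdict(dict)

    def join(self, room, peer_id):
        self.rooms[room][peer_id] = []

    def send(self, room, sender, message):
        """Relay a signaling message to every other peer in the room."""
        envelope = json.dumps({"from": sender, **message})
        for peer_id, inbox in self.rooms[room].items():
            if peer_id != sender:
                inbox.append(envelope)

    def inbox(self, room, peer_id):
        return [json.loads(m) for m in self.rooms[room][peer_id]]


server = SignalingRoom()
server.join("demo", "alice")
server.join("demo", "bob")

# Alice offers, Bob answers; the SDP strings are placeholders, not real SDP.
server.send("demo", "alice", {"type": "offer", "sdp": "<alice-sdp>"})
server.send("demo", "bob", {"type": "answer", "sdp": "<bob-sdp>"})

print(server.inbox("demo", "bob"))
print(server.inbox("demo", "alice"))
```

Note that the server never inspects the session descriptions. It only passes them along, which is why signaling can run on almost any messaging channel.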

3. NAT Traversal Servers

NAT traversal is a networking technique for establishing and maintaining Internet Protocol connections across gateways that implement network address translation (NAT).

These include:

- STUN servers – Help devices discover their public IP and port.

- TURN servers – Relay media when peer-to-peer communication fails. They ensure connections work across different network configurations.
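
Clients are usually told about both server types through an ICE server list. The sketch below builds such a list as a plain dictionary shaped like the browser's `RTCConfiguration` object. The `build_ice_config` helper, the host names, and the credentials are assumptions for illustration only.

```python
import json


def build_ice_config(stun_host, turn_host, username, credential, port=3478):
    """Return a dict shaped like the browser's RTCConfiguration object."""
    return {
        "iceServers": [
            # STUN: lets the client learn its public IP address and port.
            {"urls": f"stun:{stun_host}:{port}"},
            # TURN: relays media when no direct path can be established.
            {
                "urls": [
                    f"turn:{turn_host}:{port}?transport=udp",
                    f"turn:{turn_host}:{port}?transport=tcp",
                ],
                "username": username,
                "credential": credential,
            },
        ]
    }


# Hypothetical hosts and credentials, for illustration only.
config = build_ice_config("stun.example.com", "turn.example.com", "demo-user", "demo-pass")
print(json.dumps(config, indent=2))
```

Serialized as JSON, a structure like this can be handed to the client application, which passes it to its peer connection when the session starts.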

4. WebRTC Media Servers

A WebRTC media server is a type of “multimedia middleware” through which media traffic flows on its way from source to destination. Media servers sit between participants and handle tasks like:

- Media stream routing (e.g., SFU)

- Media mixing (e.g., MCU)

- Transcoding (codec adaptation)

- Recording and storage

WebRTC Media Servers are essential for group calls, recording sessions, or adapting streams based on network conditions.

Many popular WebRTC services are hosted today on AWS, Google Cloud, Microsoft Azure, and DigitalOcean servers. You can embed your WebRTC media into a WordPress, PHP, or other website.

What Makes a Good WebRTC Server?

When choosing a WebRTC server or media platform, consider:

- Scalability: Can it handle large numbers of viewers or participants?

- Latency: Does it offer low or ultra-low latency?

- Customizability: Can it be tailored to your workflow?

- Ecosystem: Are SDKs, plugins, and support available?

- Deployment: Does it support cloud, on-premise, and hybrid options?

What is the best server for WebRTC?

If you’re not too familiar with WebRTC servers, you might be asking yourself questions like, “Does WebRTC use a server?” or “What is a media server?” Let’s clear that up and confirm what exactly a WebRTC server is.

A WebRTC (Web Real-Time Communication) server is a specialized server or software component used to facilitate real-time communication between devices, typically through web browsers or mobile applications.

The “best” WebRTC server therefore depends on your project’s requirements and priorities, and on the software or components the server is built from.

The popular WebRTC servers below each have their own strengths and use cases to weigh when making that choice.

Popular WebRTC Servers Compared

| WebRTC Server | Best For | Strengths | Consider If… |
|---|---|---|---|
| Ant Media Server | Live streaming, low-latency video, video conferencing | Scalable, plugin-friendly, multi-protocol, transcoding, open-source | You need fast setup and sub-second latency |
| Jitsi Meet | Video conferencing, collaboration | Simple setup, supports multi-user | You want ready-made conferencing |
| Kurento | Media transformation and recording | Advanced media processing | You need real-time filters and computer vision |
| Mediasoup | Performance-critical applications | Low-level API, full control | You want fine-tuned media delivery |
| Asterisk | WebRTC and VoIP hybrid | Great for telephony integrations | You’re integrating WebRTC into PBX systems |
| Janus | Custom media routing | Modular, plugin architecture | You need control and extensibility |

To choose the best WebRTC server for your project, consider factors such as your project’s use case, scalability needs, development expertise, budget, and the level of support and documentation available for each option.

Additionally, you may want to conduct feasibility studies or small-scale testing to determine which server aligns best with your specific requirements.

Ultimately, there is no one-size-fits-all answer, and the “best” choice will depend on the unique needs and goals of your WebRTC application.

Now that you have some idea of how to choose a WebRTC server for your project, let’s compare three WebRTC multiparty topologies, which differ in the components they use and in how those components interact.

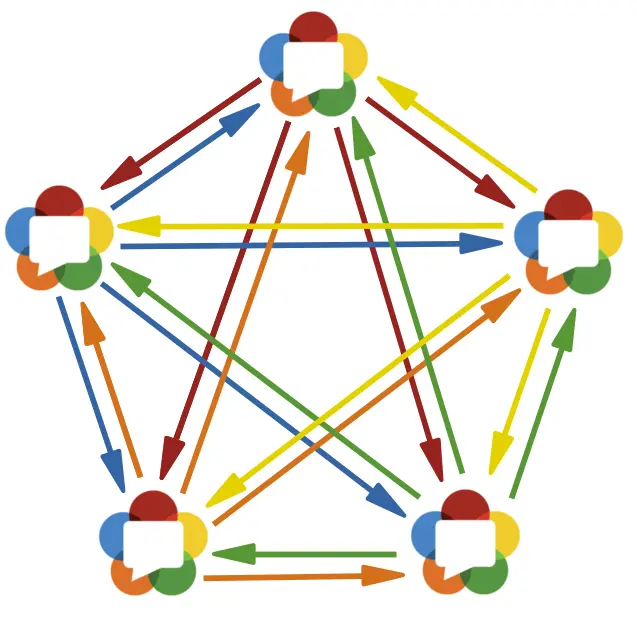

WebRTC Multiparty Topologies

Mesh Topology

Mesh is the simplest topology for a multiparty application. Every participant sends media to, and receives media from, every other participant. It is the most straightforward approach because it needs no media processing and no central unit such as a media server.

Pros:

- It requires only basic WebRTC implementation.

- Because participants connect to each other peer-to-peer, no central media server is needed.

Cons:

- Only a small number of participants (roughly 4 to 6) can connect.

- Since each participant sends media to each other, it requires N-1 uplinks and N-1 downlinks.
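
To see why mesh calls stay small, the arithmetic can be sketched as follows. The `mesh_load` helper and the 600 kbps per-stream bitrate are assumptions chosen for illustration, not measured values.

```python
def mesh_load(participants, stream_kbps):
    """Per-participant and total load in a full-mesh call."""
    links_per_peer = participants - 1  # one uplink and one downlink per remote peer
    total_connections = participants * (participants - 1) // 2
    return {
        "uplinks_per_peer": links_per_peer,
        "downlinks_per_peer": links_per_peer,
        "upload_kbps_per_peer": links_per_peer * stream_kbps,
        "total_peer_connections": total_connections,
    }


for n in (2, 4, 6, 10):
    print(n, mesh_load(n, stream_kbps=600))
```

Upload bandwidth per participant grows linearly with the call size, and each participant also has to encode and send every copy itself, which is what limits mesh in practice.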

Mixing Topology and WebRTC MCU

Mixing is another topology where each participant sends its media to a central server and receives media from the central server. This media may contain some or all of the other participants’ media. This central server is called an MCU (Multipoint Control Unit).

Pros:

- Client-side requires only basic WebRTC implementation.

- Each participant has only one uplink and one downlink.

Cons:

- Since the MCU server decodes and encodes each participant’s media, it requires high processing power.
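
The mixing step itself can be sketched for audio. The example below assumes the streams have already been decoded to 16-bit PCM samples and shows a "mix-minus" approach, where each participant receives everyone else's audio but not their own. The `mix_minus` function and the sample values are hypothetical; a real MCU would also decode before this step and re-encode after it, which is where most of the processing cost comes from.

```python
def mix_minus(frames):
    """Mix one frame of 16-bit PCM audio per participant.

    `frames` maps participant id -> list of samples of equal length.
    Each participant gets the sum of everyone else's samples, so nobody
    hears their own voice echoed back.
    """
    length = len(next(iter(frames.values())))
    total = [sum(samples[i] for samples in frames.values()) for i in range(length)]

    def clip(value):
        # Keep the result inside the signed 16-bit range.
        return max(-32768, min(32767, value))

    return {
        pid: [clip(total[i] - samples[i]) for i in range(length)]
        for pid, samples in frames.items()
    }


decoded = {
    "alice": [1000, -2000, 300],
    "bob": [500, 500, 500],
    "carol": [-100, 30000, 0],
}
for pid, mixed in mix_minus(decoded).items():
    print(pid, mixed)
```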

Routing Topology and SFU

Routing is a multiparty topology where each participant sends its media to a central server and receives all other participants’ media from the central server. This central server is called an SFU (Selective Forwarding Unit).

Pros:

- SFU requires less processing power than MCU.

- Each participant has one uplink and N-1 downlinks (four in a five-person call).

Cons:

- SFU requires a more complex design and implementation on the server-side.
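
The forwarding behavior can be illustrated with a toy model. The `SelectiveForwardingUnit` class below is a hypothetical sketch: it passes each packet through unchanged to every other participant and ignores everything a real SFU must also handle, such as RTP, congestion control, and encryption.

```python
from collections import defaultdict


class SelectiveForwardingUnit:
    """Toy SFU: forwards each packet unchanged to every other participant."""

    def __init__(self):
        self.participants = set()
        self.delivered = defaultdict(list)  # receiver id -> [(sender id, packet)]

    def join(self, participant_id):
        self.participants.add(participant_id)

    def on_packet(self, sender, packet):
        # No decoding or re-encoding: the payload is passed through as-is.
        for receiver in self.participants:
            if receiver != sender:
                self.delivered[receiver].append((sender, packet))


sfu = SelectiveForwardingUnit()
for pid in ("a", "b", "c", "d", "e"):
    sfu.join(pid)

# Every participant uploads exactly one packet (one uplink each).
for pid in sorted(sfu.participants):
    sfu.on_packet(pid, f"frame-from-{pid}".encode())

senders_seen_by_a = {sender for sender, _ in sfu.delivered["a"]}
print(len(senders_seen_by_a))  # number of downlinks for participant "a"
```

Because the server only copies packets, its cost is dominated by bandwidth rather than CPU, which is the main reason an SFU needs less processing power than an MCU.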


Advanced WebRTC Server Features

Transcoding

Transcoding is the process of decoding compressed media, changing something in it, and then re-encoding it. Change is the keyword of this process. What can be changed in the media?

First, you can change the codec, since not every codec is compatible with every protocol or player.

Second, transrating changes the bitrate of the media, for example from 600 kbps to 300 kbps.

Third, trans-sizing changes the frame size of the media, for example from 1280×720 (720p) to 640×480 (480p).

Many other changes and filtering operations are also available in video processing.

Adaptive Bitrate

Adaptive bitrate streaming is the adjustment of video quality according to the network quality. In other words, if the network quality is low, then the video bitrate is decreased by the server.

This is necessary to provide uninterrupted streaming over low-quality network connections. For adaptive bitrate to work, the stream must be available at several different bitrates.

One way to have different bitrates of the stream is transrating. Namely, the server produces different streams with different bitrates from the original stream. However, transrating is expensive in terms of processing power.
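
A minimal sketch of that idea follows. It derives a set of lower renditions from a source stream and then picks one per viewer. The `build_ladder` and `pick_rendition` helpers, the list of heights, and the rule that bitrate scales with pixel count are all assumptions for illustration, not tuned encoder settings.

```python
def build_ladder(source_height, source_kbps, heights=(1080, 720, 480, 360, 240)):
    """Derive lower renditions from a source stream.

    Bitrate is scaled with the pixel count, which is a rough rule of thumb
    and not a tuned encoder setting.
    """
    ladder = []
    for height in heights:
        if height > source_height:
            continue  # never upscale beyond the source
        scale = (height / source_height) ** 2
        ladder.append({"height": height, "kbps": round(source_kbps * scale)})
    return ladder


def pick_rendition(ladder, available_kbps):
    """Highest rendition that fits the viewer's bandwidth, else the lowest one."""
    fitting = [r for r in ladder if r["kbps"] <= available_kbps]
    if fitting:
        return max(fitting, key=lambda r: r["kbps"])
    return min(ladder, key=lambda r: r["kbps"])


ladder = build_ladder(source_height=720, source_kbps=2000)
print(ladder)
print(pick_rendition(ladder, available_kbps=1000))
```

Every entry in the ladder is an extra encode running on the server, which is where the processing cost of transrating comes from.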

Simulcast

One alternative to transrating to provide adaptive bitrate is simulcast. In this technique, the publisher sends multiple streams with different bitrates instead of one stream. The server selects the best stream for the clients by considering the network quality.
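
The server-side selection might look like the sketch below. The three layers, their bitrates, the `select_layer` function, and the 0.8 headroom factor are assumptions for illustration. The labels "q", "h", and "f" (quarter, half, full) follow a naming convention often seen in simulcast examples.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    rid: str   # layer label; "q", "h", "f" are a common convention
    kbps: int


# Layers the publisher is assumed to send at the same time.
LAYERS = [Layer("q", 150), Layer("h", 500), Layer("f", 1500)]


def select_layer(layers, estimated_kbps, headroom=0.8):
    """Pick the best layer that fits within a fraction of the estimated bandwidth."""
    budget = estimated_kbps * headroom
    ordered = sorted(layers, key=lambda layer: layer.kbps)
    chosen = ordered[0]  # always fall back to the lowest layer
    for layer in ordered:
        if layer.kbps <= budget:
            chosen = layer
    return chosen


subscribers = {"fiber": 5000, "wifi": 800, "mobile": 120}
for name, kbps in subscribers.items():
    print(name, select_layer(LAYERS, kbps).rid)
```

The server does no encoding here. It only chooses which of the publisher's streams to forward, so the cost moves from server CPU to the publisher's uplink.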

With the change and development of communication needs in the world, the curiosity and interest in WebRTC and, therefore, WebRTC servers are increasing.

To meet this interest and need, Ant Media Server is becoming a more powerful WebRTC streaming engine, offering new and promising features for WebRTC video streaming every day.

How to set up a WebRTC Server?

Let’s get started with setting up a free open-source WebRTC server using the Ant Media community edition. With this setup, you can begin live streaming via WebRTC for broadcasting and HLS for playback.

Alternatively, you can try the 14-day free trial license for the Ant Media Server enterprise edition. It enables ultra-low-latency live streaming that uses WebRTC for both publishing and playback, with latency below 0.5 seconds.

Either way, there are no streaming or viewer limits, which is especially useful if you only need a WebRTC streaming server occasionally.

Frequently Asked Questions

Do I need a server for WebRTC?

Yes, at a minimum for signaling and, in practice, for NAT traversal (STUN/TURN). Media servers are optional for one-to-one calls and small mesh calls, but larger multiparty setups need one.

What’s the difference between SFU and MCU in WebRTC servers?

An SFU (Selective Forwarding Unit) routes streams without altering them and is more scalable, while an MCU (Multipoint Control Unit) mixes multiple incoming streams into one, which is less scalable but easier for clients to receive.

Is Ant Media Server open-source?

Yes! The Community Edition is open-source and free to use.

Can I use WebRTC with WordPress?

Yes, you can embed Ant Media Server playback and publish URLs in WordPress and other platforms.

Are WebRTC servers secure?

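Yes, when configured properly. WebRTC encrypts media with DTLS and SRTP, and signaling and media servers should be served over HTTPS/TLS and protected with proper firewall rules. Keep in mind that a media server decrypts the streams it handles, so media is protected on each hop rather than end to end unless additional end-to-end encryption is added.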

Conclusion

WebRTC servers are essential components in building scalable, low-latency, real-time video and audio applications. Whether you’re hosting a multiparty video conference, a live webinar, or a streaming platform, the right server setup — with tools like Ant Media — makes a huge difference.
