Live streaming is an emerging technology that lets you watch, create, and share video content online in real time. The term is made of two words: “live” and “streaming”. The meaning of “live” is self-explanatory: it is something happening in real time.

Let’s look at the term “streaming” first. Put simply, streaming is the process of transmitting data over the internet in small chunks, so that playback can begin before all of the data has arrived.
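To make the idea of "chunks" concrete, here is a minimal sketch in Python. The function name `iter_chunks` and the tiny 10-byte payload are made up for illustration; a real stream would carry encoded media, not a byte counter.

```python
from typing import Iterator


def iter_chunks(data: bytes, chunk_size: int) -> Iterator[bytes]:
    """Yield successive pieces of `data`, each at most `chunk_size` bytes."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    for start in range(0, len(data), chunk_size):
        yield data[start:start + chunk_size]


# Stand-in for media data (hypothetical); real input would come from an encoder.
payload = bytes(range(10))

# The sender emits small pieces instead of one large blob.
chunks = list(iter_chunks(payload, 4))

# The receiver can start working on chunks[0] before the rest has arrived,
# and joining all chunks in order gives back the original data.
reassembled = b"".join(chunks)
```

The last chunk is simply shorter than the others when the data does not divide evenly.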

What is live streaming?

Live streaming is the method of transmitting video content over the internet in real time. In other words, digital video is delivered as it is captured, usually within an acceptable latency.

The latest technologies have made live streaming easier. With one click, we can watch an event happening on the other side of the world. Whether it is a live match, a virtual event, a concert, or a live class, it is only a click away.

But have you ever wondered how it actually works internally? In this article, we will dig into the technical aspects of live video streaming.

We will take a real example and walk through each step. Suppose Ant Media is hosting a talk on improving live streaming quality, and we need to live stream the event to viewers all over the world.

How does live streaming work?

If you enjoy live broadcasts, you have probably wondered at least once how they work. Live streaming is usually a five-step process:

- Getting the stream from a video camera

- Encoding the stream

- Sending the stream to end nodes

- Processing on a global CDN or edge nodes

- Decoding & playing the video

Let’s dive a little deeper to understand each of these steps.

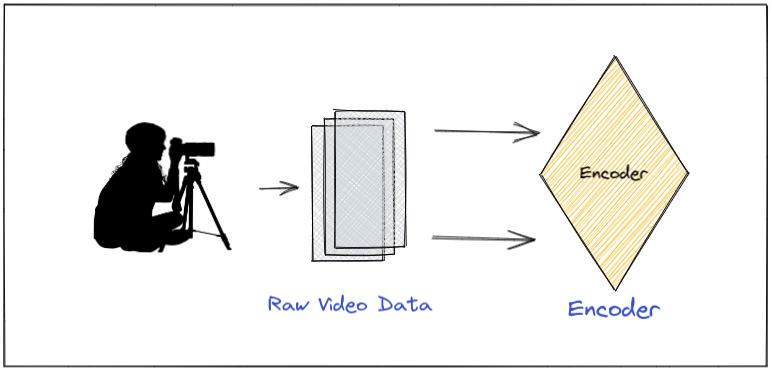

Getting the stream from a video camera

The very first step is capturing video from the camera. Frames of video are captured and converted into digital signals (binary data, 0s and 1s), which will be processed and used later for streaming. At this point it is just raw data that has yet to be refined for use.

Common use cases include concerts, live matches, online classes, and virtual events.

Encoding the stream

From the camera, we get raw data that is not easy to manage. It is very large and almost impossible to stream over the internet as it is. So, to convert it to a streamable format, we use an encoder. This step has two parts: compressing the raw data and converting it to a new format. The encoder applies a set of optimizations to the content to make it streamable over the internet.
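A quick back-of-the-envelope calculation shows why raw video is so hard to stream. The sketch below assumes uncompressed 8-bit RGB (24 bits per pixel) at 1080p and 30 frames per second. These numbers are assumptions chosen for illustration, and real cameras often use more compact pixel formats.

```python
def raw_bitrate_bps(width: int, height: int, fps: int, bits_per_pixel: int = 24) -> int:
    """Bits per second needed to send every pixel of every frame uncompressed."""
    return width * height * bits_per_pixel * fps


# Assumed capture settings: 1920x1080, 30 fps, 24 bits per pixel.
bps = raw_bitrate_bps(1920, 1080, 30)
print(f"Raw bitrate: {bps / 1e9:.2f} Gbit/s")  # roughly 1.49 Gbit/s
```

That is far more than most home connections can sustain, which is why the encoder has to shrink the data before it is sent.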

Compression

The raw video data often contains redundant visual information. For example, while we are capturing the conference, the speaker is standing in front of a black curtain. The black background barely changes from one frame to the next, so there is no need to send it again with every frame. The encoder’s job is to smartly remove such redundant visuals and keep only the parts that change from frame to frame. It does this using codecs such as H.264, H.265, and AV1, which reduces the size of the data considerably.
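The idea of keeping only what changes between frames can be shown with a toy example. This is not how H.264, H.265, or AV1 work internally (they use far more sophisticated prediction and coding techniques). It is only a simplified sketch in which a frame is a flat list of pixel values, and `encode_deltas` and `decode_deltas` are hypothetical helper names.

```python
from typing import Dict, List, Tuple

Frame = List[int]  # a frame is modelled as a flat list of pixel values


def encode_deltas(frames: List[Frame]) -> Tuple[Frame, List[Dict[int, int]]]:
    """Keep the first frame whole, then store only the pixels that changed."""
    if not frames:
        raise ValueError("need at least one frame")
    keyframe = list(frames[0])
    deltas: List[Dict[int, int]] = []
    previous = keyframe
    for frame in frames[1:]:
        if len(frame) != len(previous):
            raise ValueError("all frames must have the same size")
        changed = {i: new for i, (old, new) in enumerate(zip(previous, frame)) if old != new}
        deltas.append(changed)
        previous = frame
    return keyframe, deltas


def decode_deltas(keyframe: Frame, deltas: List[Dict[int, int]]) -> List[Frame]:
    """Rebuild every frame by applying each set of changes to the previous frame."""
    frames = [list(keyframe)]
    for changed in deltas:
        frame = list(frames[-1])
        for index, value in changed.items():
            frame[index] = value
        frames.append(frame)
    return frames


# A bright pixel (9) moves one step to the right on a black (0) background,
# then the picture stays still.
frames = [
    [0, 0, 0, 0, 9, 0, 0, 0],
    [0, 0, 0, 0, 0, 9, 0, 0],
    [0, 0, 0, 0, 0, 9, 0, 0],
]
keyframe, deltas = encode_deltas(frames)
restored = decode_deltas(keyframe, deltas)
```

Here the second frame is described by just two changed pixels and the third by none at all, while the unchanged black background is never sent again.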

Encoding

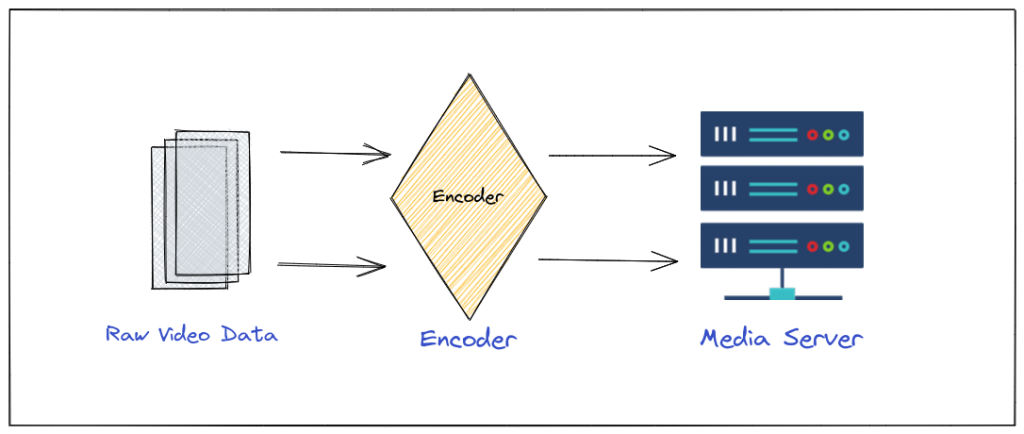

In the next step, the encoder packages the compressed data for a protocol like RTMP (Real-Time Messaging Protocol) so that it can be streamed over the internet.
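One common way to try this out is the ffmpeg command-line tool, which can compress a source with H.264 and push it to an RTMP endpoint. The sketch below only builds and prints the command. It assumes ffmpeg with libx264 is installed, and the file name `talk.mp4`, the URL `rtmp://media.example.com/live/talk`, and the bit rates are placeholders, not values from this article.

```python
import shlex
from typing import List


def build_rtmp_push_command(
    source: str,
    rtmp_url: str,
    video_kbps: int = 3000,
    audio_kbps: int = 128,
    fps: int = 30,
) -> List[str]:
    """Build an ffmpeg argument list that encodes `source` and pushes it over RTMP."""
    return [
        "ffmpeg",
        "-re",                      # read the input at its native frame rate, like a live source
        "-i", source,
        "-c:v", "libx264",          # compress video with H.264
        "-preset", "veryfast",      # trade some compression efficiency for encoding speed
        "-b:v", f"{video_kbps}k",   # target video bit rate
        "-g", str(fps * 2),         # a keyframe roughly every two seconds
        "-c:a", "aac",              # compress audio with AAC
        "-b:a", f"{audio_kbps}k",
        "-f", "flv",                # RTMP carries FLV-packaged data
        rtmp_url,
    ]


# Placeholder source file and server URL (assumptions for illustration).
command = build_rtmp_push_command("talk.mp4", "rtmp://media.example.com/live/talk")
print(shlex.join(command))
```

To actually start the stream, the list could be passed to `subprocess.run(command)` once the URL points at a real media server.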

Sending the stream to end nodes

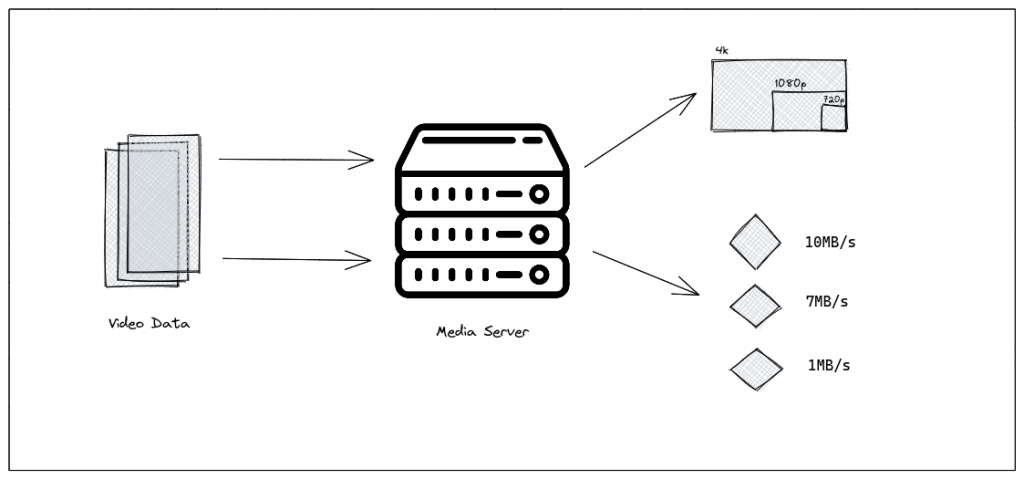

Once the data has been segmented into small chunks, compressed, and encoded, it is transferred to a media streaming server, such as Ant Media Server. The server is responsible for creating multiple versions of the data to suit the needs of different client devices.

To ensure a good experience across all devices, the server can transcode the data to a different codec. It can also produce versions of the data at different bit rates and at different resolutions (1080p, 720p, etc.).
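The set of versions a server produces is often described as a list of renditions. Below is a small sketch of such a list. The names, heights, and bit rates are illustrative assumptions, not Ant Media Server defaults, and `renditions_for_source` is a hypothetical helper that skips versions larger than the incoming video.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Rendition:
    name: str
    height: int       # vertical resolution in pixels
    video_kbps: int   # target video bit rate


# Hypothetical set of renditions, ordered from highest to lowest quality.
LADDER = (
    Rendition("1080p", 1080, 5000),
    Rendition("720p", 720, 2800),
    Rendition("480p", 480, 1400),
    Rendition("360p", 360, 800),
)


def renditions_for_source(source_height: int, ladder: Sequence[Rendition] = LADDER) -> List[Rendition]:
    """Keep only renditions that do not exceed the source resolution (no upscaling)."""
    return [r for r in ladder if r.height <= source_height]


# A 720p source is offered as 720p, 480p, and 360p, but not 1080p.
available = renditions_for_source(720)
print([r.name for r in available])
```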

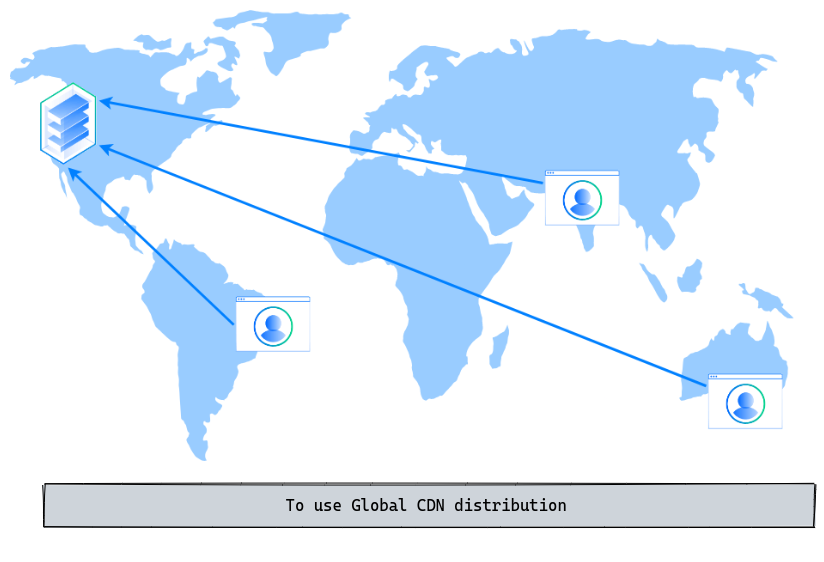

Processing on a global CDN or edge nodes

To ensure low-latency and high-quality live stream delivery, we use the power of global CDNs. Simply put, a CDN (content delivery network) is a geographically distributed group of servers that caches and delivers content on behalf of the origin server. It speeds up delivery by serving the data from the server nearest to you. It also reduces the load on the main server and significantly lowers latency.

Using a CDN is not a required step. We can serve the content directly from our media server, but it is usually recommended to serve it via a CDN. Other reasons to use a CDN instead of the origin server include avoiding bandwidth bottlenecks and reducing RTT (round-trip time).
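The caching behaviour of a CDN edge server can be sketched in a few lines. This is a simplified model, not a real CDN: `EdgeCache`, the `origin` function, and the segment name are hypothetical, and a production cache would also expire entries, which matters for live content.

```python
from typing import Callable, Dict, List


class EdgeCache:
    """A toy edge server that asks the origin only for content it has not seen yet."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes]) -> None:
        self._fetch = fetch_from_origin
        self._store: Dict[str, bytes] = {}

    def get(self, key: str) -> bytes:
        if key not in self._store:
            # Cache miss: fetch once from the origin and remember the result.
            self._store[key] = self._fetch(key)
        return self._store[key]


origin_hits: List[str] = []


def origin(key: str) -> bytes:
    """Stand-in for the media server; records every request it receives."""
    origin_hits.append(key)
    return f"data for {key}".encode()


edge = EdgeCache(origin)

# Three viewers near the same edge request the same video segment.
for _ in range(3):
    edge.get("segment_001.ts")

print(len(origin_hits))  # the origin was contacted only once
```

Only the first request reaches the origin, so the load on the media server stays low while nearby viewers are served from the edge.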

Decoding & playing the video

All client devices receive the streams over the CDN. Viewers may be using a web browser, a video player in a mobile app, or another device. The decoder on each device repeats the initial steps in reverse order: it unpacks the data and decompresses it to reconstruct the video frames for playback.

Remember, our media server converted the video into chunks at multiple resolutions and bit rates. These versions are used here: the player picks the one that best fits the user’s internet speed, which provides a good user experience.
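How a player might choose between those versions can be sketched as follows. This is a deliberately simple rule, not the algorithm of any particular player: the rendition names and bit rates are assumed values, and the `safety` factor (use only 80% of the measured bandwidth) is a hypothetical margin.

```python
from typing import Sequence, Tuple

# Hypothetical renditions as (name, required kbps) pairs.
RENDITIONS: Tuple[Tuple[str, int], ...] = (
    ("1080p", 5000),
    ("720p", 2800),
    ("480p", 1400),
    ("360p", 800),
)


def pick_rendition(
    measured_kbps: float,
    renditions: Sequence[Tuple[str, int]] = RENDITIONS,
    safety: float = 0.8,
) -> str:
    """Return the best rendition that fits within a fraction of the measured bandwidth."""
    budget = measured_kbps * safety
    ordered = sorted(renditions, key=lambda r: r[1], reverse=True)
    for name, kbps in ordered:
        if kbps <= budget:
            return name
    # Nothing fits: fall back to the lowest bit rate rather than stop playback.
    return ordered[-1][0]


print(pick_rendition(10000))  # fast connection
print(pick_rendition(4000))   # average connection
print(pick_rendition(500))    # slow connection
```

A real player repeats this kind of decision continuously while the video plays, switching versions as the connection improves or degrades.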

Conclusion

In this blog post, we have learned about the magic behind live streaming. We could dig deeper into each component, but this should be enough to give you an idea of how live video streaming works. The high-level architecture of live streaming follows this flow: capturing the raw video data, passing it through the encoder to make it streamable, transcoding it into multiple formats to ensure a better user experience, serving it over a global CDN, and finally the client receiving the stream and the video player playing it on the device.

Ant Media Server for real-time live streaming

Ant Media provides ready-to-use, highly scalable real-time video streaming solutions for live video streaming needs. These solutions provide live video streaming capabilities and can be deployed easily and quickly on-premises or on public cloud networks such as AWS, Azure, Alibaba, and others.

Ant Media’s well-known product, Ant Media Server, is a video streaming platform and technology enabler that provides highly scalable, ultra-low latency (WebRTC) and low latency (CMAF & HLS) video streaming solutions, with a dashboard to manage all streaming needs.

With Ant Media Server, you can make real-time live streams and easily set up your own live streaming platform. Moreover, you can distribute your broadcasts to social media platforms at the same time and reach many more viewers.

In our guest blog post, Pankaj Tanwar gives an overview of live streaming and how it works. Pankaj develops software and writes blogs, some of which are based on video streaming, and is active on Twitter. Opinions expressed by Ant Media contributors are their own.
